Projects

Current Projects

- Accuracy of Sound Level Meter Applications for iPhone and Android Cell Phones

- Comparison of Monitored Live Voice versus Computer-Assisted Presentation of Word Recognition

Accuracy of Sound Level Meter Applications for iPhone and Android Cell Phones

The focus of this research is to determine the accuracy of cell phone sound level meter applications that are available to all users, and their suitability for consumer use and for potential research measurements. Previous studies have investigated the accuracy of sound level meter applications across different phone manufacturers; however, many of these studies were performed several years ago. Given recent technological advancements, the need for updated information on the integrity of these applications remains. This study is evaluating the accuracy of two cell phone applications based on whether they are calibrated or uncalibrated and whether the internal or external microphone is used. Determining the reliability and objectivity of these applications will help verify whether they can be used confidently in research settings.

Comparison of Monitored Live Voice versus Computer-Assisted Presentation of Word Recognition

The focus of this research is to replicate the findings from Mendel and Owen (2011) regarding the time it takes to administer word recognition testing using monitored live voice (MLV) versus computer-assisted technology. Mendel and Owen (2011) showed that there was no clinically significant difference in test time using MLV and CD recordings of word recognition stimuli, yet audiologists continue to use MLV presentation despite the evidence that recorded presentation is much more accurate. The present study is investigating the time difference between MLV and computer-assisted recorded materials presented directly from the audiometer. This work will improve audiology practices by negating the argument that it takes additional clinical time to complete word recognition testing using recorded materials and encourage audiologists to more closely follow best practices by collecting more meaningful and accurate information about their patients’ test results.

Recent Projects

- Listening Effort and Speech Perception Performance Using Different Facemasks

- Validation of Spanish Speech Recognition Tests

- SPAT: Speech Perception Assessment with Transducers

- Validation of a Clinical Electrode Discrimination Task for Postlingually Deafened Adult Cochlear Implant Users

- Speech Perception Performance in Ecological Noise

- Bilingualism and its effects on speech perception in noise

- Objective and subjective assessment of perceived mild-to-moderate hearing loss

- Speech Perception Performance in Noise for Bilingual and Monolingual Children

- Auditory Outcomes in Patients Who Received Proton Radiotherapy for Craniopharyngioma

- Corpus of Deaf Speech for Acoustic and Speech Production Research

- Algorithms for Unsupervised and Online Learning of Hierarchy of Features for Tuning Cochlear Implants for the Hearing-Impaired

- Speech Understanding Using Surgical Masks

- Confidence Intervals for the Maryland CNC Test

- A Study of Recorded versus Live Voice Word Recognition

- Bilingualism and Its Effects on Speech Perception in Noise

- Speech Perception in Noise for Bilingual Listeners with Normal Hearing

- Subjective and Objective Assessment of Hearing Aid Outcomes

- Speech Intelligibility and Hearing Function in Navy Divers

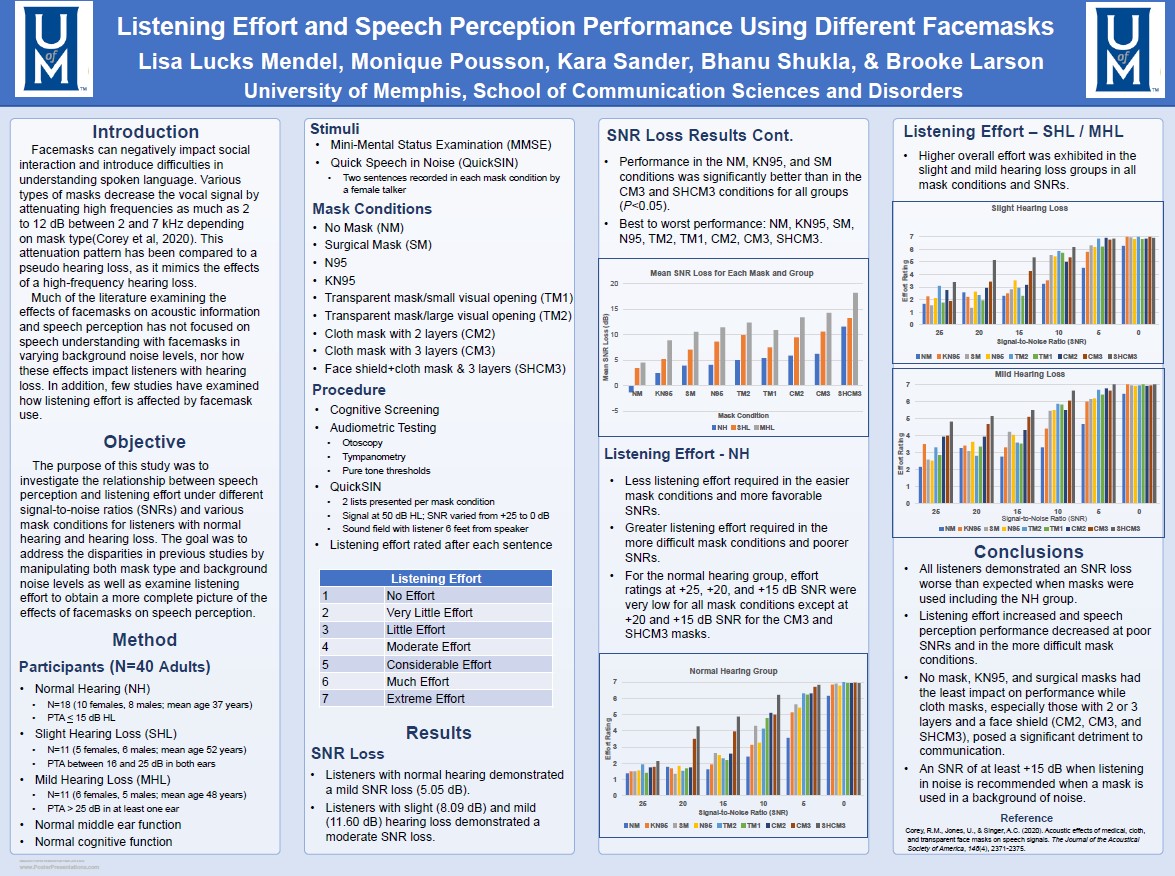

Listening Effort and Speech Perception Performance Using Different Facemasks

During the COVID-19 pandemic, mask mandates and recommendations became commonplace in home and work life. Some types of masks made speech understanding especially difficult. This research assessed mask effects on speech perception and listening effort for listeners with normal hearing and hearing loss. Sentences from the Quick Speech-in-Noise test were presented using eight different mask types with varied background noise levels. Results indicated that cloth masks with numerous layers, as well as the addition of a face shield, created the most difficulty, even for listeners with normal hearing. The N95 mask, considered the best mask for protection, also created difficulty, especially for listeners with hearing loss. If communication is to occur in a background of noise while wearing masks, a KN95 mask and a signal-to-noise ratio of at least +15 dB are recommended regardless of hearing status.

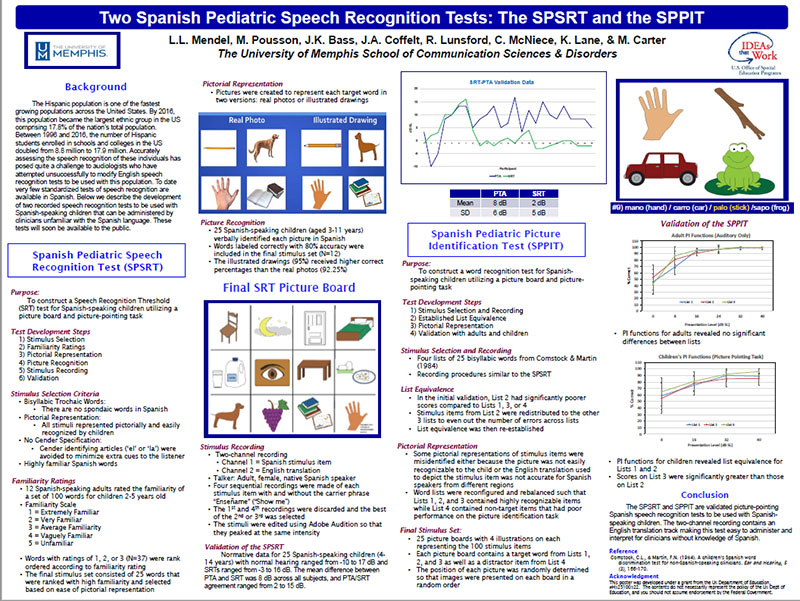

Validation of Spanish Speech Recognition Tests

In this series of studies, we have developed the Spanish Pediatric Speech Recognition Threshold (SPSRT) test and the Spanish Pediatric Picture Identification Test (SPPIT). Both of these tests are now available from Auditec, Inc. Please click on the links below if you are interested in purchasing these tests.

- Spanish Pediatric Speech Recognition Threshold (SPSRT)

- Spanish Pediatric Picture Identification Test (SPPIT)

Click on poster for readable version.

The SPAT: Speech Perception Assessment with Transducers

Speech audiometry is a clinical tool designed to assess functional hearing performance. Speech perception tests provide a measure of how well listeners understand speech in a controlled environment (Lucks Mendel & Danhauer, 1997), and results from speech perception tests are used to fit amplification devices and plan rehabilitation.

The purpose of this study is to examine the effects of ear specificity and transducer type on speech perception performance using monosyllabic words in quiet and noise.

Validation of a Clinical Electrode Discrimination Task for Postlingually Deafened Adult Cochlear Implant Users

Recent research has suggested that selectively deactivating electrodes can improve speech perception abilities for cochlear implant users. The development of a reliable and valid electrode discrimination task could help clinicians identify poorly performing electrodes more quickly and easily compared to current methods. The goal of this study is to determine test-retest reliability for an electrode discrimination task in an effort to design a clinically applicable test for electrode deactivation using a pitch perception task.

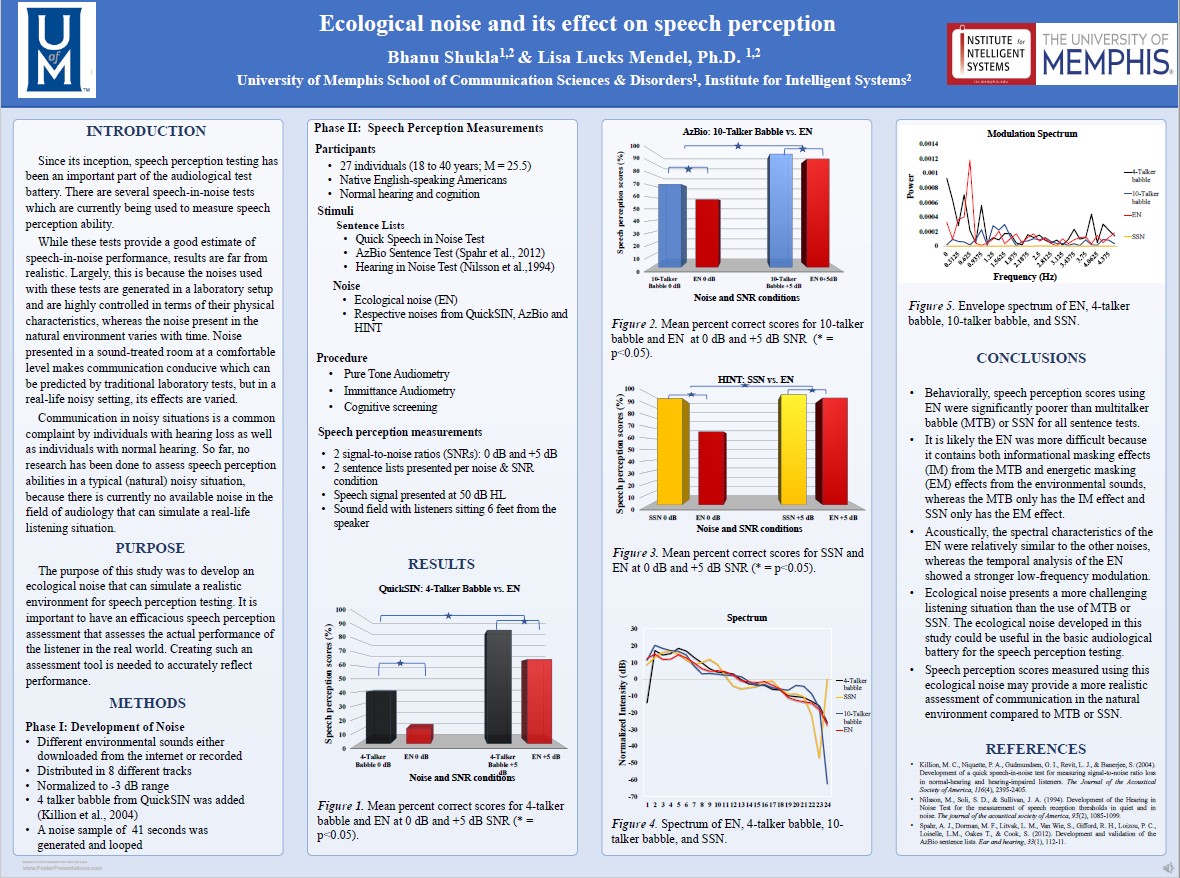

Speech Perception Performance in Ecological Noise

Research has suggested that there would be benefit to measuring speech perception in a realistic scenario. Currently there is no noise stimulus used in the audiological test battery that simulates a real-world environment. This study focuses on developing and validating an ecological noise that consists of different environmental sounds such as birds chirping, leaves rustling, and traffic noise for clinical use for speech perception testing in the routine audiological test battery.

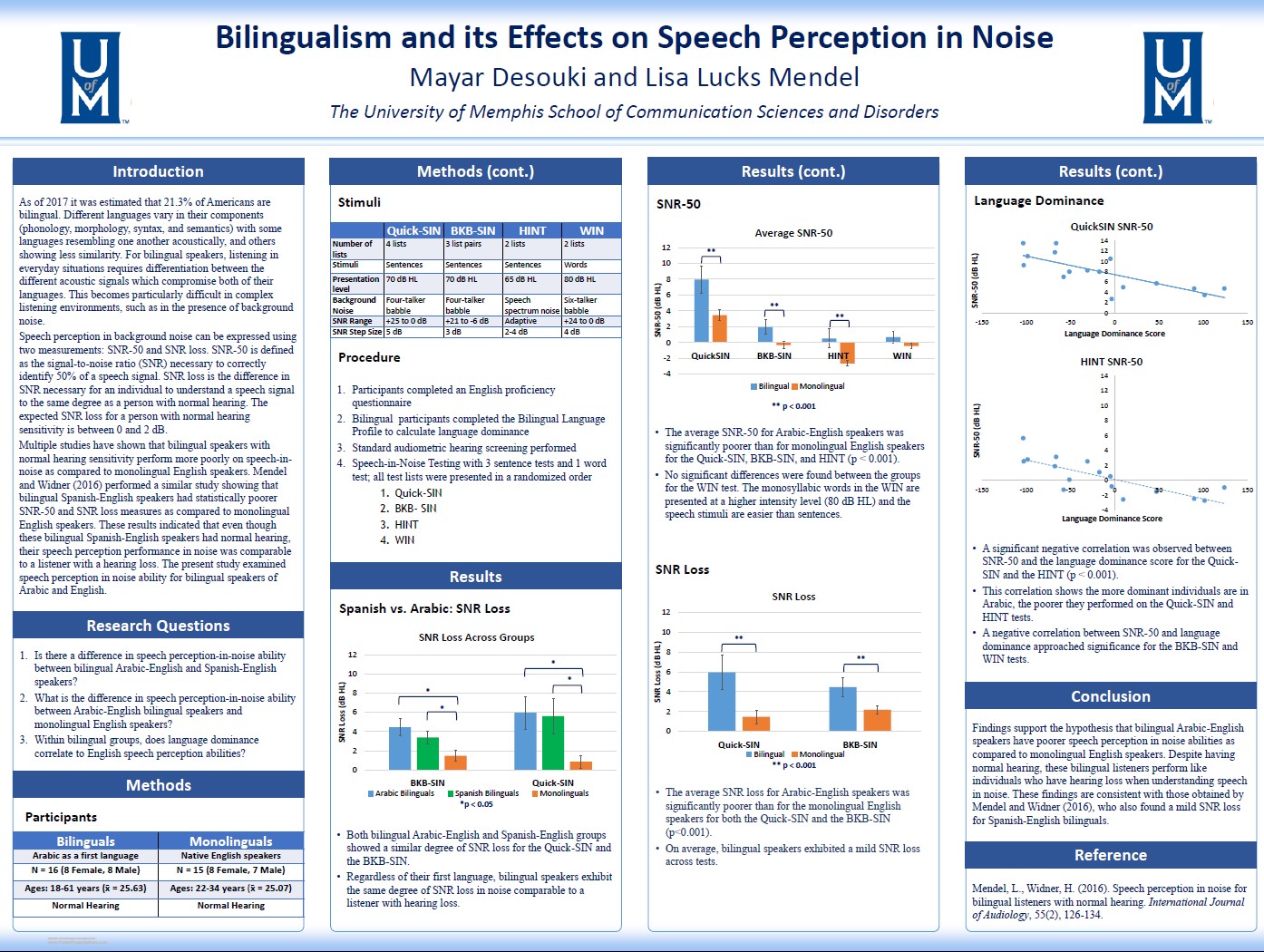

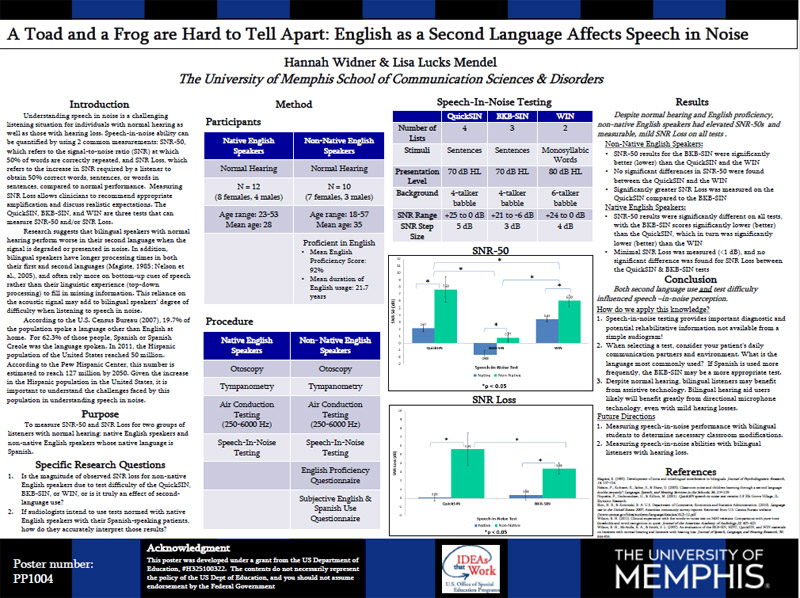

Bilingualism and its effects on speech perception in noise

The primary purpose of this study was to extend upon the growing body of research regarding speech perception abilities in bilingual populations. Mendel and Widner (2016) performed a study showing that bilingual Spanish-English speakers had statistically poorer speech perception abilities in background noise when compared to monolingual English speakers. This study examined speech perception in noise for bilingual speakers of Arabic and English, as compared to their monolingual English-speaking counterparts. The secondary purpose of the study was to examine the similarities in speech perception abilities between bilingual Arabic and Spanish speakers under similar testing conditions.

Results indicated a significant negative correlation between SNR-50 and the language dominance score for the QuickSIN and the HINT (p < 0.001). This correlation shows that the more dominant individuals were in Arabic, the more poorly they performed on the QuickSIN and HINT tests. A negative correlation between SNR-50 and language dominance approached significance for the BKB-SIN and WIN tests. It was concluded that bilingual Arabic-English speakers have poorer speech-perception-in-noise abilities than monolingual English speakers. Despite having normal hearing, these bilingual listeners perform like individuals who have hearing loss when understanding speech in noise. These findings are consistent with those obtained by Mendel and Widner (2016), who also found a mild SNR loss for Spanish-English bilinguals.

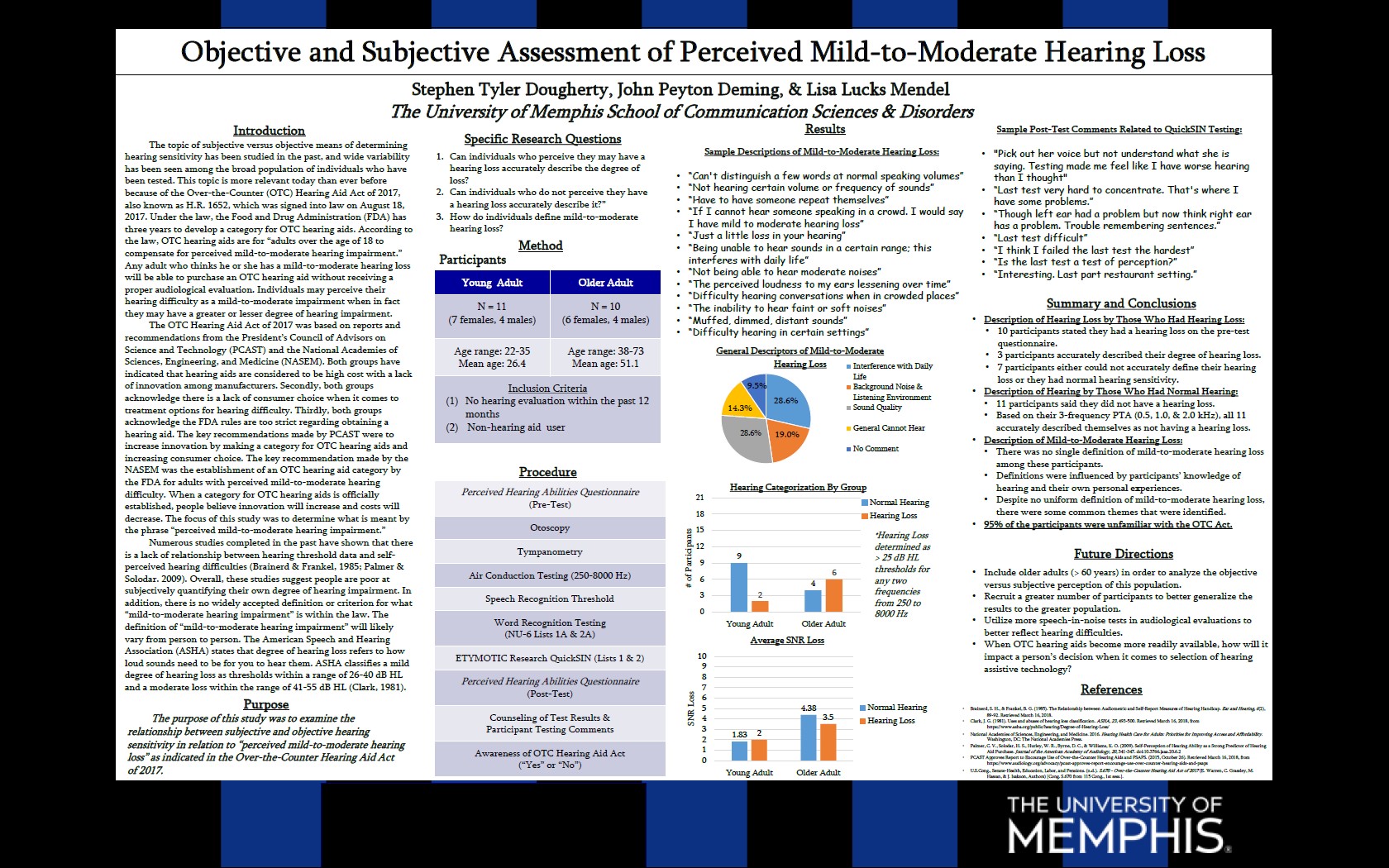

Objective and subjective assessment of perceived mild-to-moderate hearing loss

The Over the Counter (OTC) Hearing Aid Act of 2017 was based on reports and recommendations from the President's Council of Advisors on Science and Technology (PCAST) and the National Academies of Sciences, Engineering, and Medicine (NASEM). The key recommendations made by the PCAST were to increase innovation by making a category for OTC hearing aids and increase consumer choice. The key recommendation made by the NASEM was the establishment of an OTC hearing aid category by the FDA for adults with perceived mild-to-moderate hearing difficulty. When a category for OTC hearing aids is officially established, people believe innovation will increase, and costs will decrease. The focus of this study was to determine what is meant by the phrase "perceived mild-to-moderate hard of hearing."

Results indicated that there was no single definition of mild-to-moderate hearing loss among the participants and definitions were influenced by participants' knowledge of hearing and their own personal experiences.

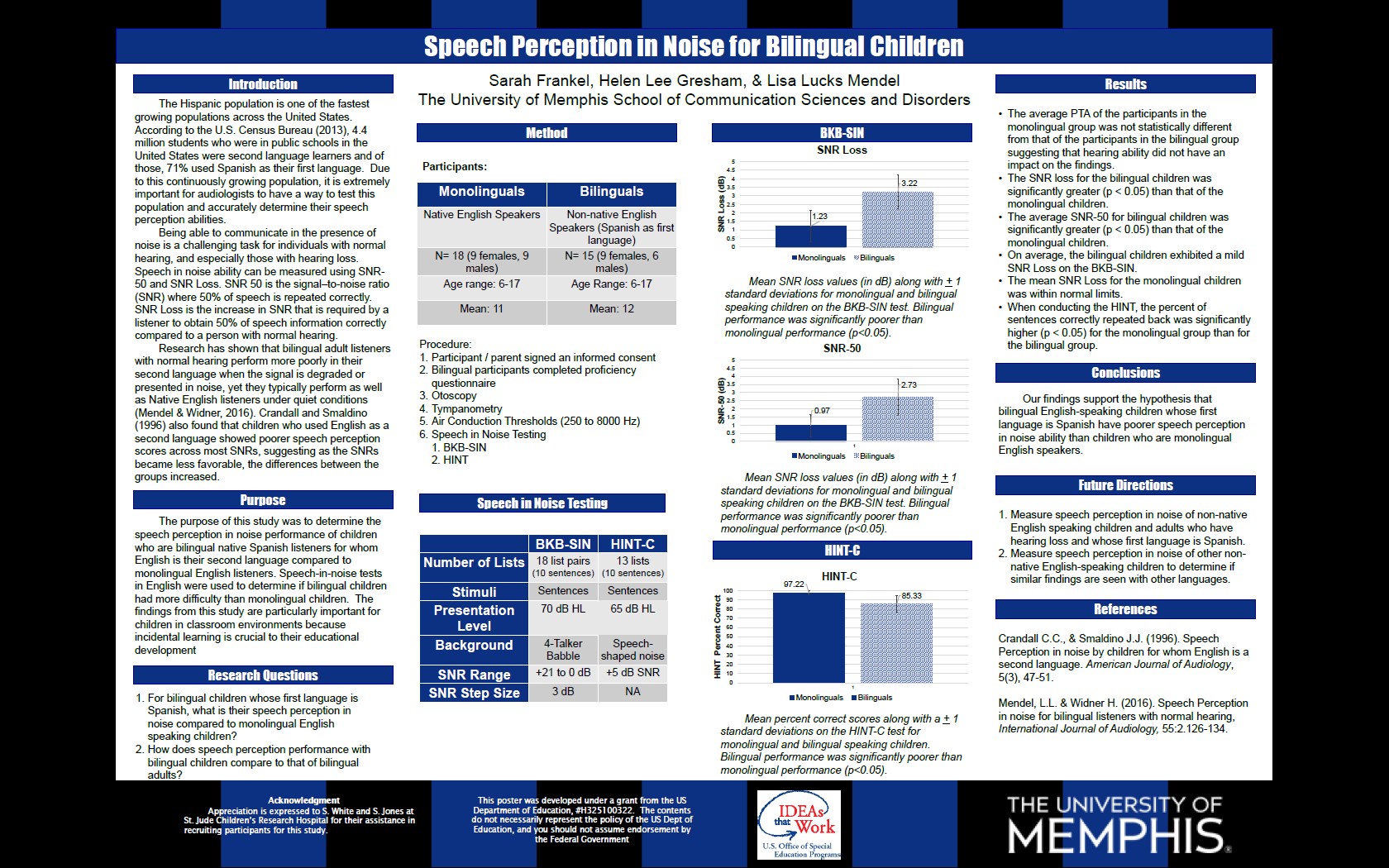

Speech Perception Performance in Noise for Bilingual and Monolingual Children

The Hispanic population is one of the fastest growing populations across the United States. Research has shown that bilingual, normal-hearing listeners perform more poorly in their second language when the signal is degraded or presented in noise, yet they typically perform as well as native English listeners under quiet conditions. This study was designed to determine the speech-in-noise performance of bilingual children (native Spanish speakers for whom English is a second language (ESL)) compared to monolingual children (native English speakers). Speech-in-noise tests in English were used to determine if bilingual children had more difficulty than monolingual children.

The findings of this study showed that bilingual English-speaking children whose first language is Spanish have poorer speech-perception-in-noise ability than children who are monolingual English speakers. SNR loss for bilingual children was significantly greater (p < 0.05) than that of the monolingual children. On average, the bilingual children exhibited a mild SNR loss on the BKB-SIN, and the percent of sentences correctly repeated back on the HINT was significantly higher (p < 0.05) for the monolingual group than for the bilingual group. Also, the average SNR-50 for bilingual children was significantly greater (p < 0.05) than that of the monolingual children.

These findings are consistent with how adults perform and are particularly important for children in classroom environments because incidental learning is crucial to their educational development.

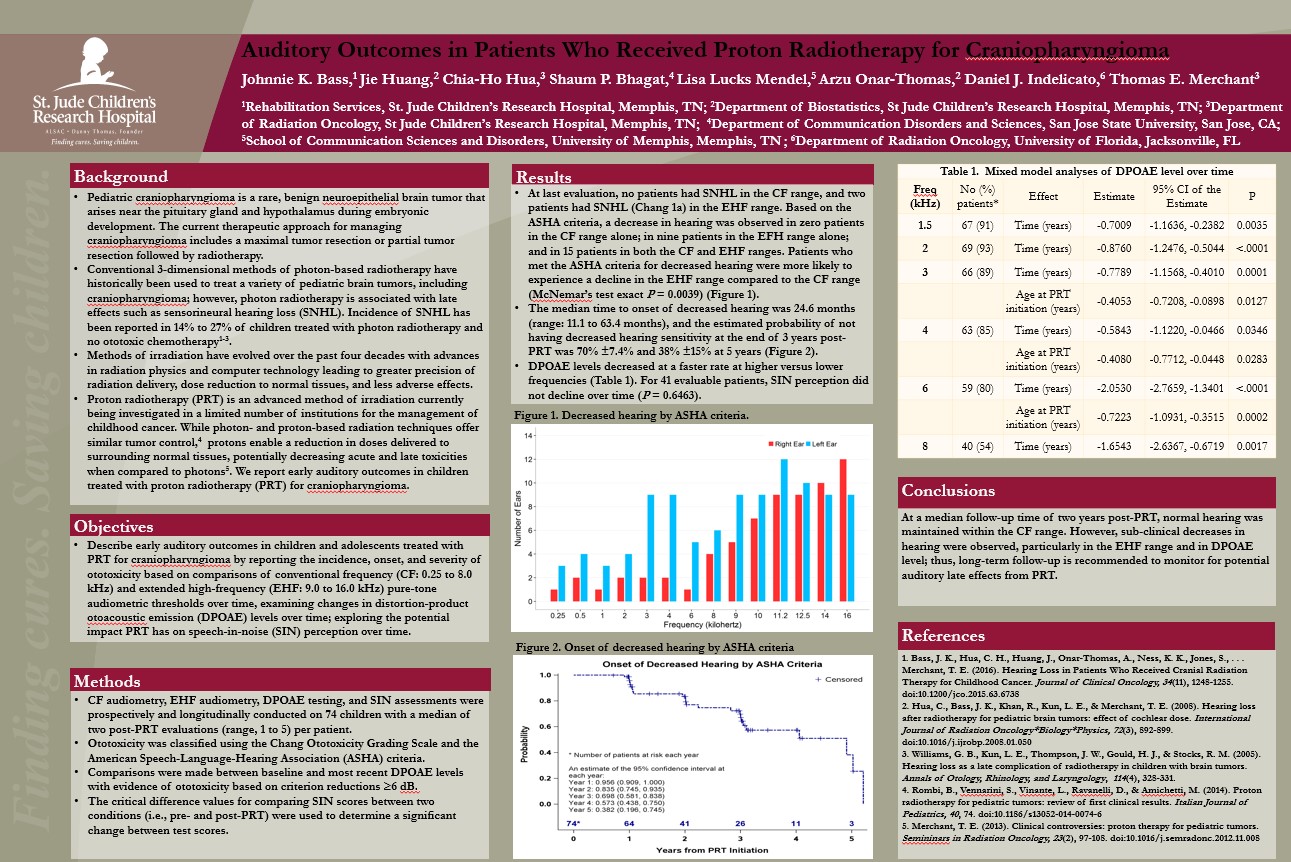

Auditory Outcomes in Patients Who Received Proton Radiotherapy for Craniopharyngioma

Pediatric craniopharyngioma is a rare, benign neuroepithelial brain tumor that arises near the pituitary gland and hypothalamus during embryonic development. The current therapeutic approach for managing craniopharyngioma includes a maximal tumor resection or partial tumor resection followed by radiotherapy. Incidence of SNHL has been reported in 14% to 27% of children treated with photon radiotherapy and no ototoxic chemotherapy. Proton radiotherapy (PRT) is an advanced method of irradiation currently being investigated in a limited number of institutions for the management of childhood cancer.

In this study, early auditory outcomes in children and adolescents treated with PRT for craniopharyngioma are determined by reporting the incidence, onset, and severity of ototoxicity based on comparisons of conventional frequency (CF: 0.25 to 8.0 kHz) and extended high-frequency (EHF: 9.0 to 16.0 kHz) pure-tone audiometric thresholds over time; examining changes in distortion-product otoacoustic emission (DPOAE) levels over time; and exploring the potential impact PRT has on speech-in-noise (SIN) perception over time.

At a median follow-up time of two years post-PRT, normal hearing was maintained within the CF range. However, sub-clinical decreases in hearing were observed, particularly in the EHF range and in DPOAE level; thus, long-term follow-up is recommended to monitor for potential auditory late effects from PRT.

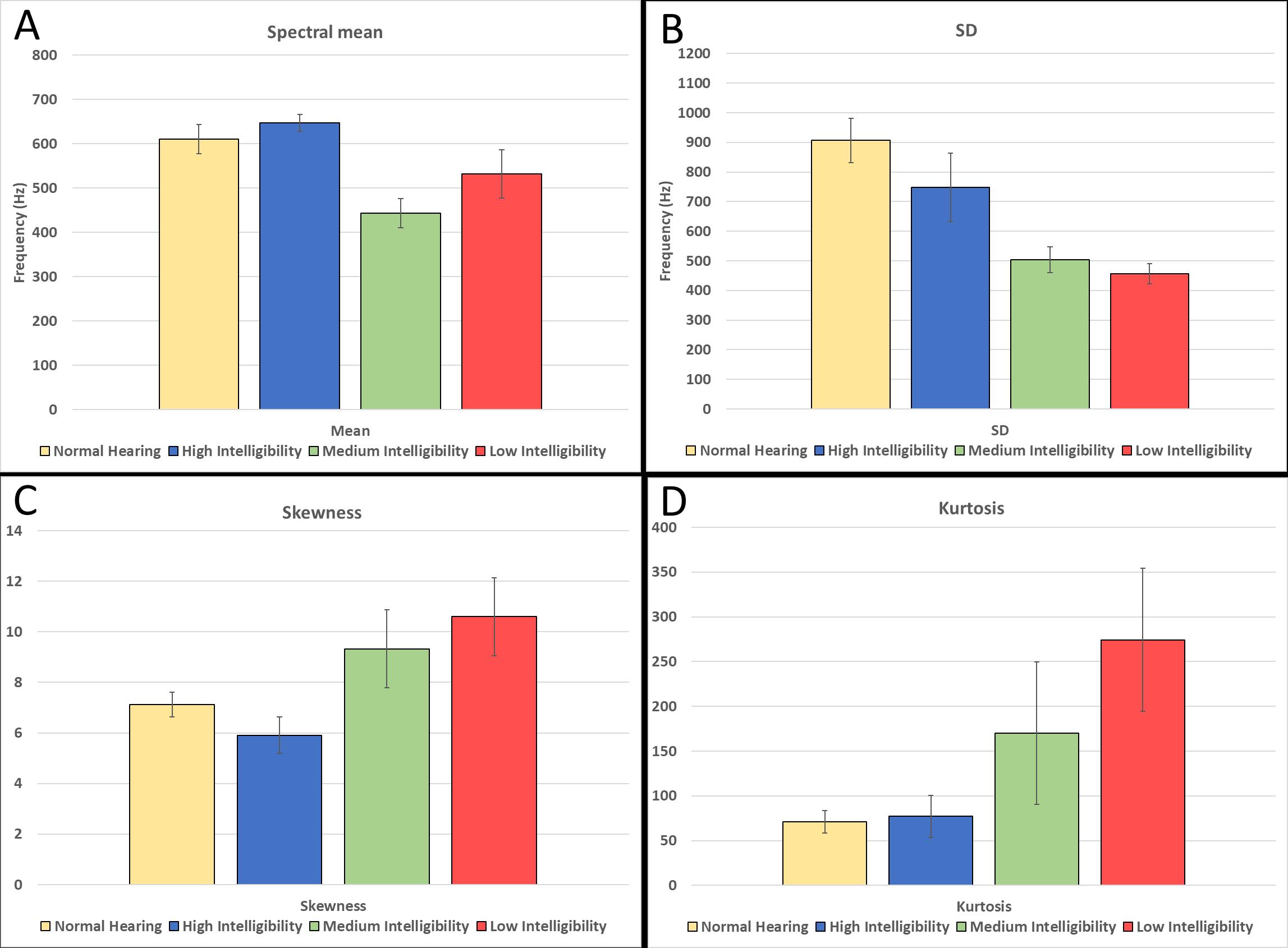

Corpus of Deaf Speech for Acoustic and Speech Production Research

In this project, a corpus of recordings of deaf speech is introduced. Adults who were pre- or post-lingually deafened, as well as those with normal hearing, read standardized speech passages totaling 11 hours of .wav recordings. Preliminary acoustic analyses are included to provide a glimpse of the kinds of analyses that can be conducted with this corpus of recordings. Long-term average speech spectra as well as spectral moment analyses provide considerable insight into differences observed in the speech of talkers judged to have low, medium, or high speech intelligibility (Mendel et al., 2017). If you are interested in obtaining access to the corpus of deaf speech recordings, please email Dr. Lisa Lucks Mendel or Monique Pousson.

Fig. 3. Four spectral moments for the NH and HI groups (H, M, and L) for all five passages combined. (A) Average spectral mean; (B) SD; (C) skewness; and (D) kurtosis. Error bars represent ±1 standard deviation.

Algorithms for Unsupervised and Online Learning of Hierarchy of Features for Tuning Cochlear Implants for the Hearing-Impaired

In this project we are developing machine learning algorithms to tune hearing instruments, particularly cochlear implants, based on each individual's hearing characteristics and speech production errors. The speech production capabilities of individuals with severe to profound sensorineural hearing loss are being analyzed with the assumption that deficiencies in their speech production output are a reflection of their poor speech perception capabilities. The speech production analysis and the algorithms will help to determine modifications that can be made to hearing instruments to improve speech perception. Ongoing samples of normal hearing and hearing-impaired speech will be analyzed to document the speech characteristics and deficiencies from these two populations. The missing and distorted features from the hearing-impaired speech are being identified, and algorithms are being developed that will ultimately be used to improve the signal processing strategies used in hearing instruments to enhance the audibility of speech features for hearing-impaired individuals.

This award is through the Smart Health and Wellbeing (SHB) Program of NSF that seeks to address fundamental technical and scientific issues that support transformation of healthcare from reactive and hospital-centered to preventive, proactive, evidence-based, person-centered and focused on wellbeing rather than disease.

The NSF Project webpage can be found here.

Speech Understanding Using Surgical Masks

In this project, we evaluated whether surgical masks have an effect on speech understanding in listeners with normal hearing and listeners who are hard of hearing. In Phase One of this project, speech perception was assessed for individuals with normal hearing and hearing loss using a traditional paper surgical mask with speech stimuli administered in the presence and absence of dental office noise (Mendel, L.L., Gardino, J.A., & Atcherson, S.R., 2008).

A total of 31 adults participated in the first study (1 talker, 15 listeners with normal hearing, and 15 listeners who were hard of hearing). The normal hearing group had thresholds of 25 dB HL or better at the octave frequencies from 250 through 8000 Hz, while the hearing loss group had varying degrees and configurations of hearing loss with thresholds equal to or poorer than 25 dB HL for the same octave frequencies.

Selected lists from the Connected Speech Test (CST) were digitally recorded with and without a surgical mask present and then presented to the listeners in four conditions: without a mask in quiet, without a mask in noise, with a mask in quiet, and with a mask in noise. A significant difference was found in the spectral analyses of the speech stimuli with and without the mask. The presence of a surgical mask, however, did not have a detrimental effect on speech understanding in either the normal-hearing or hearing-impaired groups. The dental office noise did have a significant effect on speech understanding for both groups. These findings suggest that the presence of a surgical mask did not negatively affect speech understanding. However, the presence of noise did have a deleterious effect on speech perception and warrants further attention in health-care environments.

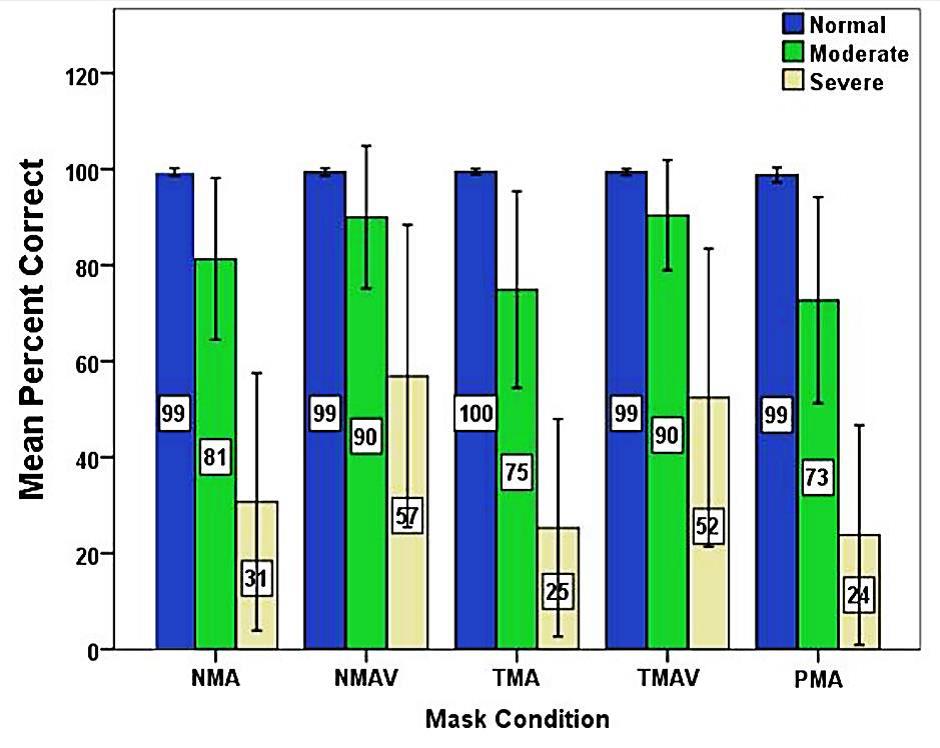

Phase Two of this study focused on assessing the effect of three masks (a traditional paper mask and two different masks that allow some visual cues) with three groups of listeners: normal hearing, moderately hard of hearing, and severe-to-profoundly hard of hearing (Atcherson, et al., 2016).

A total of 31 adults participated in the study: 1 talker, 10 listeners with normal hearing, 10 listeners with moderate sensorineural hearing loss, and 10 listeners with severe-to-profound hearing loss. A significant difference was found in the spectral analyses of the speech stimuli with and without the masks; however, the difference was no more than ~2 dB (RMS). Listeners with normal hearing performed consistently well across all conditions. Both groups of listeners who were hard of hearing benefitted from visual input from the transparent mask. The magnitude of improvement in speech perception in noise was greatest for the severe-to-profound group. Findings confirm improved speech perception performance in noise for listeners who are hard of hearing when visual input is provided using a transparent surgical mask. Most importantly, the use of the transparent mask did not negatively affect speech perception performance in noise.

Figure 1. Mean percent correct performance on the Connected Speech Test following arcsine transformation for listeners with normal (blue), moderate SNHL (green), and severe SNHL (yellow) in the following conditions: no mask audio-only (NMA), no mask audio-visual (NMAV), transparent mask audio-only (TMA), transparent mask audio-visual (TMAV), and paper mask audio-only (PMA).

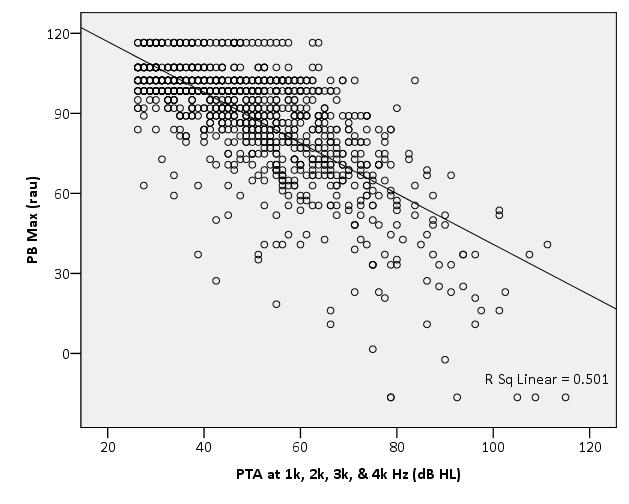

Confidence Intervals for the Maryland CNC Test

In this retrospective study, records of veterans who had audiological compensation and pension examinations (hearing evaluations) at the Veterans Administration Medical Center (VAMC) in Jackson, Mississippi between 1992 and 2001 were reviewed. Audiologists are often called upon to decide whether a given word recognition score is in line with what is expected from a patient with a given degree of hearing loss. Comparison of actual scores with expected or predicted scores has diagnostic and rehabilitative implications and also provides information for judging the validity of the obtained score and the accompanying pure tone thresholds. However, there is currently no objective and quantitative methodology in widespread use for evaluating word recognition scores. The purpose of this study was to establish an objective method to assist the audiologist in assessing the word recognition score obtained as part of a hearing evaluation. Over 2000 clinical records from the VAMC were reviewed, and confidence limits were established for representative scores.

Scatterplot of PB Max (rau) scores as a function of pure tone average at 1000, 2000, 3000, and 4000 Hz for all ears with hearing loss.

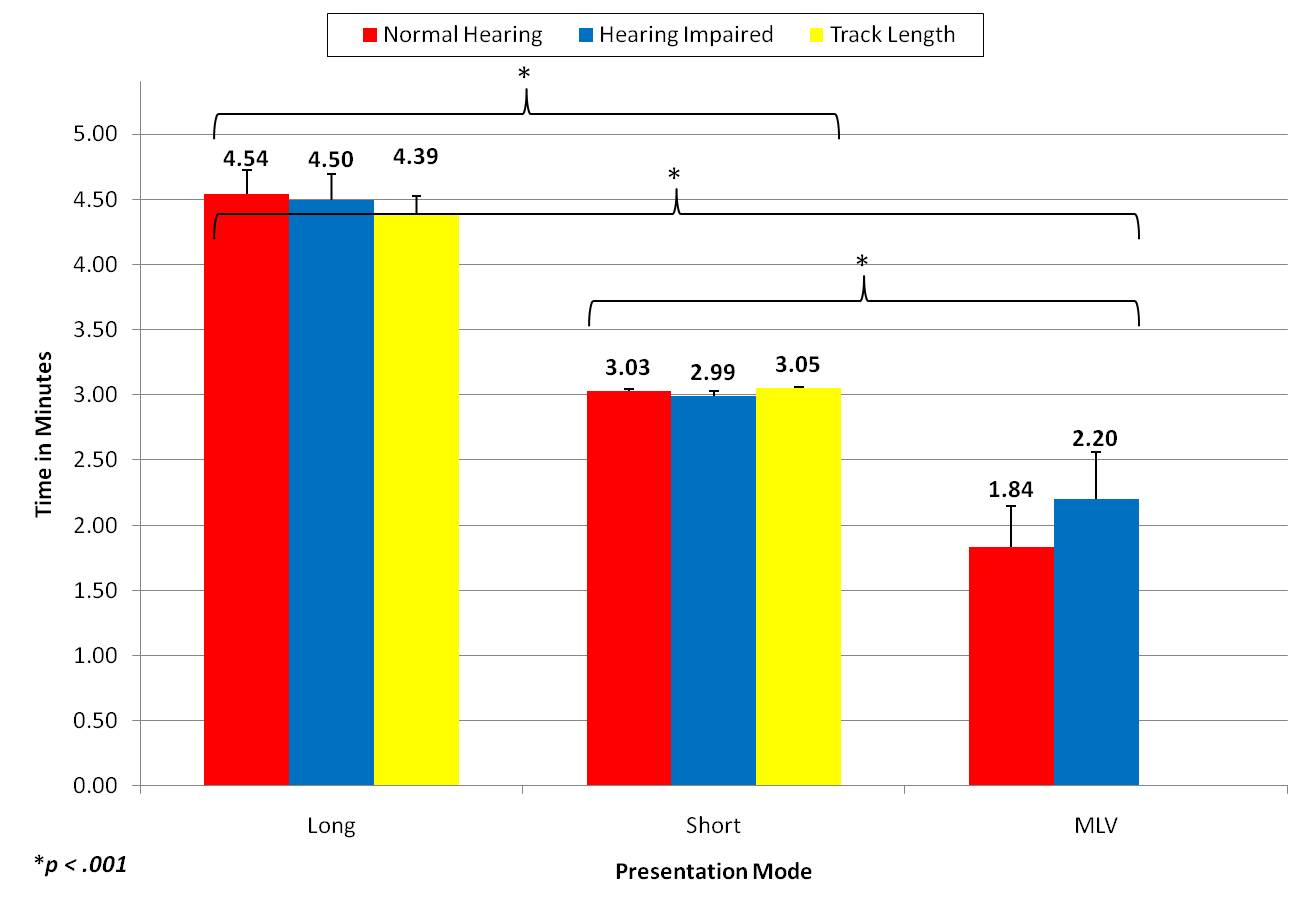

A Study of Recorded versus Live Voice Word Recognition

In this study, we examined administration times for monitored-live-voice (MLV) versus recorded presentation of NU-6 word lists for listeners with normal hearing and hearing loss. This study documented that test administration time for MLV presentation of monosyllabic word lists was significantly shorter than that for recorded presentations of the same stimuli, both for listeners with normal hearing and for listeners who are hard of hearing. However, this difference was just over one minute for listeners with normal hearing (1 min, 9 sec) and just under one minute for listeners with hearing loss (49 sec). The listeners with hearing loss took longer to respond to the stimuli than the listeners with normal hearing, which reduced the difference in administration time between MLV and recorded lists for this population. Given that the majority of patients audiologists test have hearing loss, the average difference in test administration time between MLV and recorded presentation was less than one minute. Thus, although this is a statistically significant difference, it is our belief that this difference is not clinically significant. That is, given these findings, we suggest that clinicians should be willing to sacrifice less than one minute of time per word list for greater reliability of the results.

Portions of this study were presented at the American Speech-Language-Hearing Association (ASHA) Annual Convention in November, 2010 and at AudiologyNOW! in April, 2011. This manuscript was published in The International Journal of Audiology in 2011.

Administration time in minutes across the three presentation conditions and the two groups. CD track lengths are also plotted for the long and short ISIs (interstimulus intervals).

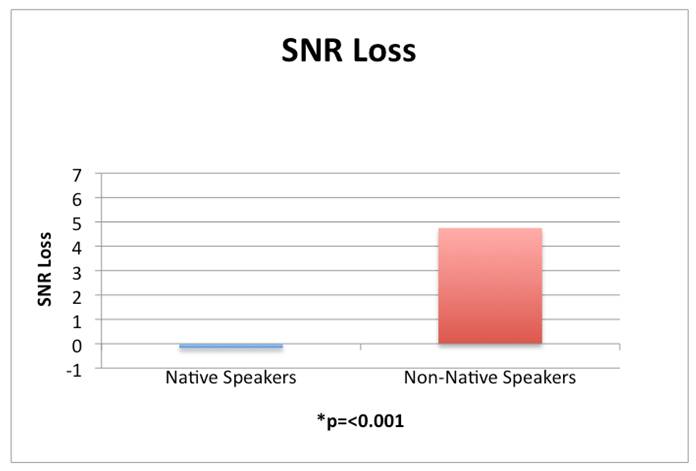

Bilingualism and Its Effects on Speech Perception in Noise

In Phase I of this study, signal-to-noise ratio loss was measured in two groups of normal hearing participants: (a) those with English as their native language and (b) those with English as a second language (i.e., native speakers of Asian languages). Results indicated that participants for whom English is a second language performed significantly worse than native English speakers in a background of multi-talker babble. This difficulty in speech perception in noise reflects poor processing of English stimuli in a background of noise, making these individuals with normal hearing function as though they are hard of hearing. Phase II of this study compared similar groups of subjects except that the non-native speakers were all Hispanic.

Non-native English speakers had significantly greater SNR loss than their English-speaking counterparts.

Speech Perception in Noise for Bilingual Listeners with Normal Hearing

Phase II of this study compared similar groups of subjects except that the non-native speakers were all Hispanic. Results indicated that bilingual Spanish listeners with normal hearing who are proficient in English performed significantly more poorly in noise than their monolingual English-speaking controls. Because of this decreased performance in noise, this population requires an improved SNR to reach a level of comprehension comparable to that of their monolingual English-speaking counterparts. It is recommended that speech-in-noise tests be used with bilingual patients as part of the audiometric test battery to provide additional insight into their speech perception capabilities.

Click on poster for readable version.

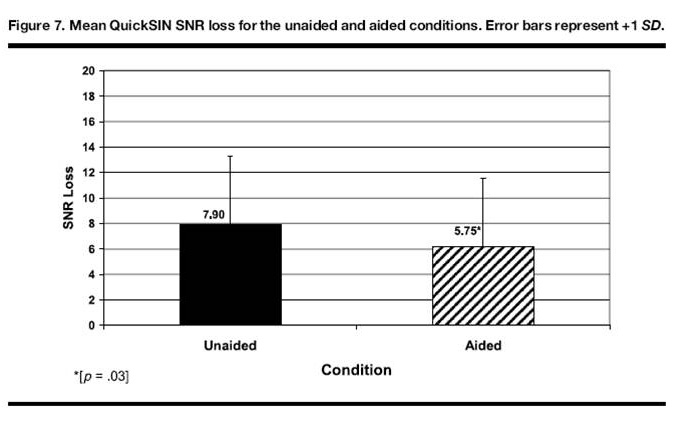

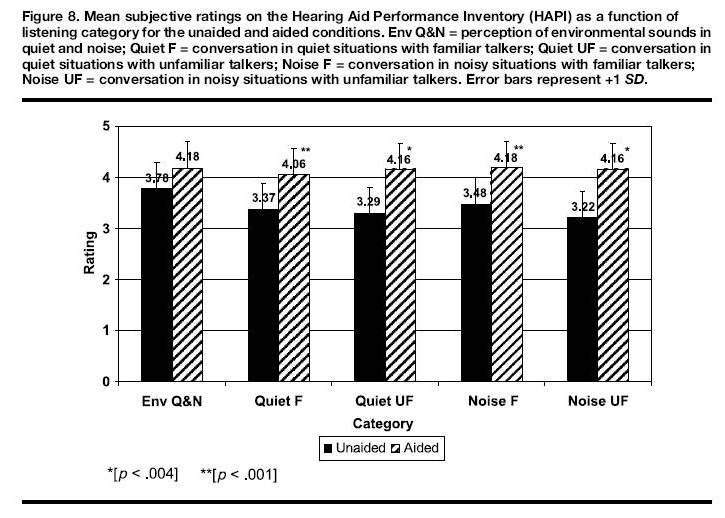

Subjective and Objective Assessment of Hearing Aid Outcomes

Procedures currently used for evaluating hearing aids have fallen short of the goal of accurately assessing a listener's speech perception capabilities. Neither verification methods that determine whether hearing aids provide adequate gain according to prescriptive techniques nor validation methods that summarize patients' subjective perceptions of hearing aid benefit are sufficient by themselves. In this project, selected objective and subjective outcome measures are being evaluated that have the best likelihood of providing the desired information about hearing aid benefit. Newer speech recognition tests that were developed with appropriate standardization are being evaluated along with subjective measures of self report that have been rigorously tested and validated for measuring hearing aid benefit. The effectiveness of both these objective and subjective outcome measures will then be evaluated and compared to determine their accuracy in documenting speech perception capabilities. Based on pilot data (Mendel, 2007) it is anticipated that at least some of the newly developed speech recognition materials will be sensitive enough to demonstrate objective hearing aid benefit and that their results will correlate well with patients' subjective perceptions of that benefit on specific self-report measures. This project will verify that if both objective and subjective assessments are truly valid, then both types of outcomes will provide similar results.

The results of this project will more clearly define the relationship between objective and subjective outcome measures in an attempt to better define true hearing aid benefit. Thus, the long-term objective of this project is to be able to make recommendations to clinicians regarding the inclusion of appropriate speech recognition tests and self-report measures as an integral part of the hearing aid evaluation process. The results of this study will not only make the clinician's job easier, but they will also provide the needed evidence that such outcomes are valid regarding assessment of subjective and objective speech perception performance with hearing aids.

From Mendel, L.L. (2007). Objective and Subjective Hearing Aid Assessment Outcomes, American Journal of Audiology, 16, 118-129.

Speech Intelligibility and Hearing Function in Navy Divers

Previously, Dr. Lucks Mendel served as Associate Director for the Center for Speech and Hearing Research in the National Center for Physical Acoustics at the University of Mississippi. During that time, she received over $500,000 of external funding to conduct research with Navy divers at the Navy Experimental Diving Unit in Panama City, Florida. These research projects focused primarily on studying changes in hearing physiology that occurred when Navy divers were at depth. In addition, studies were conducted that focused on developing ways to improve speech intelligibility and speech perception among divers who work in noisy environments under adverse conditions. Because Navy divers work at such great depths and must breathe helium, the quality and intelligibility of their speech are affected. A related project focused on analyzing the acoustic characteristics of the helium speech that was produced. The effects of helium and pressure changes on speech production and perception were studied in order to make improvements in the communication systems used by these divers.