2019 - December 5: We are draining gpuq nodes to update the CUDA driver. We are also draining nodes c075, c076, c077, and c078 to be placed into a shortq partition. This partition will have a 2-day time limit, and each job will be limited to a single node.

2019 - November 4: Thank you for attending today's meeting. If you wanted to attend but didn't make it, we will be having the same meeting on November 7th in the Administration Building, room 191, at 11:00 AM.

2019 - October 23: We will be having our semester meeting in room 191 of the Administration Building on Monday, November 4th at 11:00 AM. If you are unable to attend on Monday, we will also be available to meet at the same time and place on Thursday, November 7th.

2019 - August 1: We have implemented x2go remote desktop with XFCE for cluster login in addition to the normal ssh login. Download x2go-client from here.

2019 - May 13-18: We will be modifying SLURM's job scheduling. There will be no downtime.

2019 - May 13: We will be decreasing the default memory per CPU from 2 gigabytes down to 1 gigabyte.

2019 - April 24: Access to old storage from the first Penguin cluster, specifically hpcxfer.memphis.edu:/oldoldhome, will be decommissioned, but access to previous cluster storage, hpcxfer.memphis.edu:/oldhome and hpclogin.memphis.edu:/oldhome, will remain. Additionally, there is an HPC meeting at 11 AM on Wednesday the 24th in FIT 203/205, which will include discussion of HPC resources and policies.

2019 - January 30: Old storage from both the previous (2015-2018) and first (2012-2015) Penguin clusters is available by ssh on hpcxfer.memphis.edu. Just use your campus user name and password like you do on the current cluster.

Archive storage is available for those who are interested.

2019 - January 3: Cluster scheduler reconfigured.

2018 - September 25: The new cluster should be in place by the end of October 2018. The current cluster will be shut down around the middle of October (exact date to be determined) and will be removed by the end of October. See new cluster details at 2018 HPC upgrade.

We are draining the gpuq node partitions and updating the CUDA driver. Additionally, this will provide updates for the CUDA toolkit, BLAS, and FFT modules (versions 10.0 and 10.1). Once a node is drained, the driver is updated, and the node is rebooted, it will be un-drained. This shouldn't affect any jobs, but jobs may show 'Resources' while in 'PENDING' status.

We are also draining nodes c075, c076, c077, and c078 to place them in a new partition called shortq. You can use this partition by simply changing the sbatch partition option to '--partition shortq'. This partition will be limited to 2-day jobs and single nodes (aka shared memory). MPI will work on these nodes, but the single node rule will limit your execution to one node per job. We will not extend time limits on these nodes.
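As a rough illustration of the shortq limits described above, a batch script might look like the sketch below. The partition name, the single-node rule, and the 2-day limit come from this announcement; the job name and task count are hypothetical placeholders.

```shell
#!/bin/bash
#SBATCH --partition=shortq        # new partition from this announcement
#SBATCH --nodes=1                 # shortq jobs are limited to a single node
#SBATCH --ntasks=4                # hypothetical task count
#SBATCH --time=2-00:00:00         # at most 2 days (DAYS-HOURS:MINUTES:SECONDS)
#SBATCH --job-name=shortq_example # hypothetical job name

# Outside Slurm these variables are unset, so fall back to placeholders.
job_id="${SLURM_JOB_ID:-none}"
node_name="${SLURMD_NODENAME:-${HOSTNAME:-unknown}}"
echo "Job ${job_id} running on ${node_name}"
```

Submitting the file with sbatch queues it on shortq. Run directly with bash, it only prints the placeholder values.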

Thank you for your attention.

Dear all,

We would like to invite you to a meeting covering an overview of the current HPC scheduling parameters and restrictions, and how to leverage these resources for your research. The meeting will be held on Monday, November 4th from 11:00 AM to 12:00 PM in the Administration Building (room 191). If you are unable to make it, we will be available on Thursday, November 7th at the same time and place.

Topics covered:

- Cluster utilization/throughput of jobs

- Metrics such as wait time, job sizes and job times

- Current job scheduling parameters and potential improvements

- Common job scheduling problems and solutions

- Storage

- Current utilization/growth of cluster storage

- User home directories versus user scratch directories and backup

- Archive storage

- Checkpointing with DMTCP (Dynamic MultiThreaded CheckPointing) to prevent job loss

Your input is highly appreciated and will help us to improve our ability to serve

you.

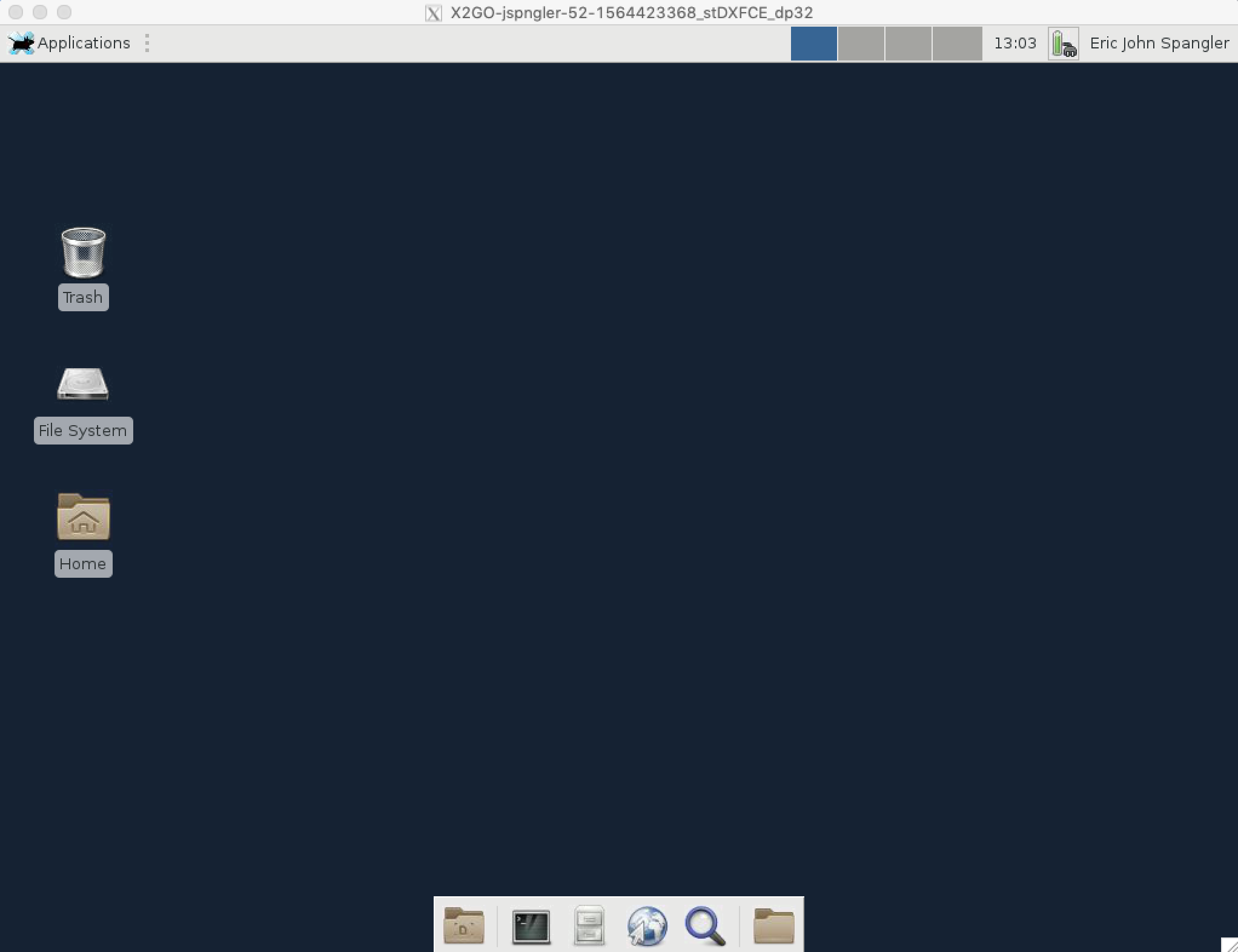

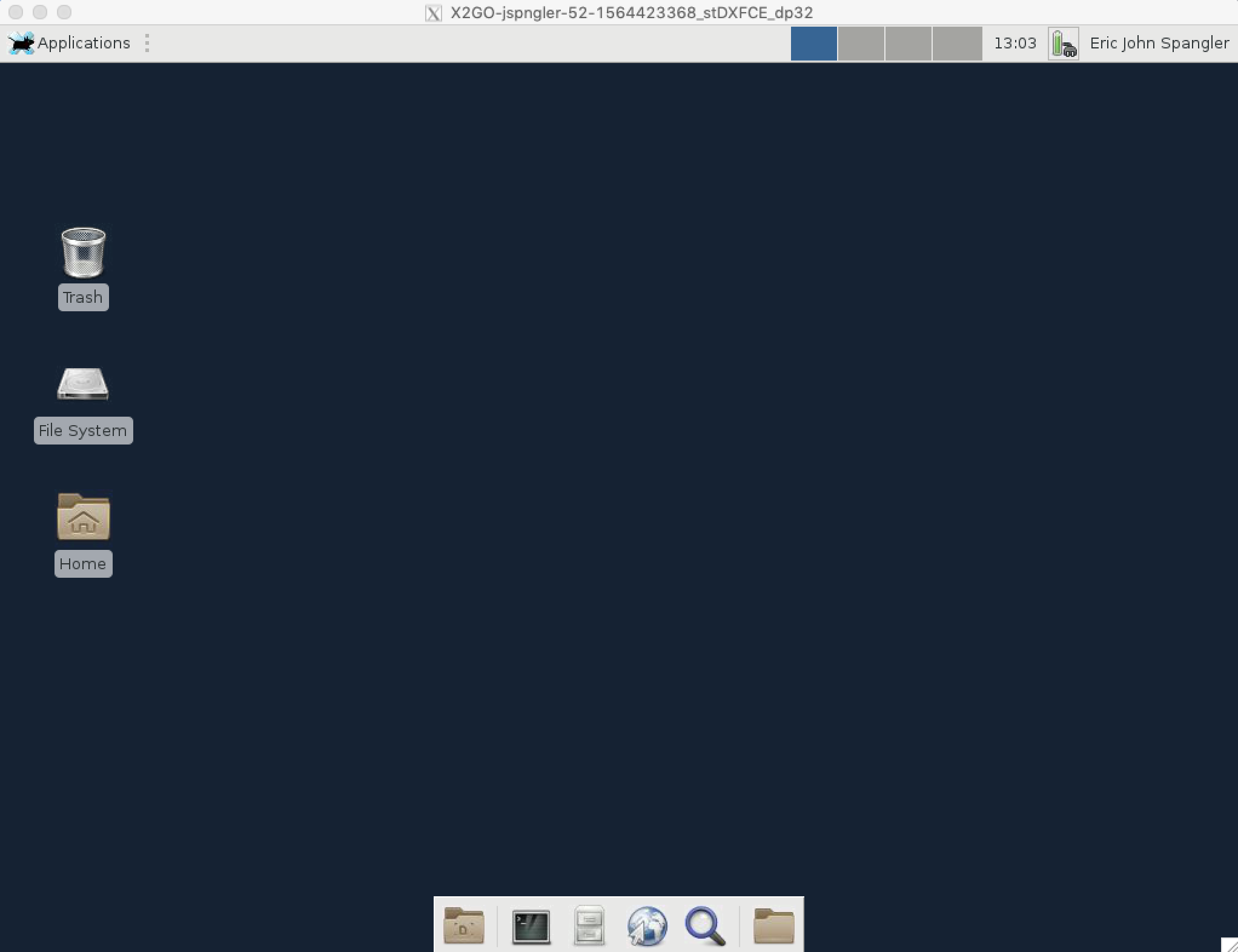

We have a new way to connect to the cluster using x2go. Using the x2go client, you can connect to the cluster with an XFCE desktop! From this desktop, you can run a terminal, browse your home directories, submit jobs, view data, and more, all with a click of your mouse! It is also much faster and less error-prone than connecting with ssh X forwarding.

To connect (on campus; off campus, you must first connect through the campus VPN):

- Download and install the x2go client.

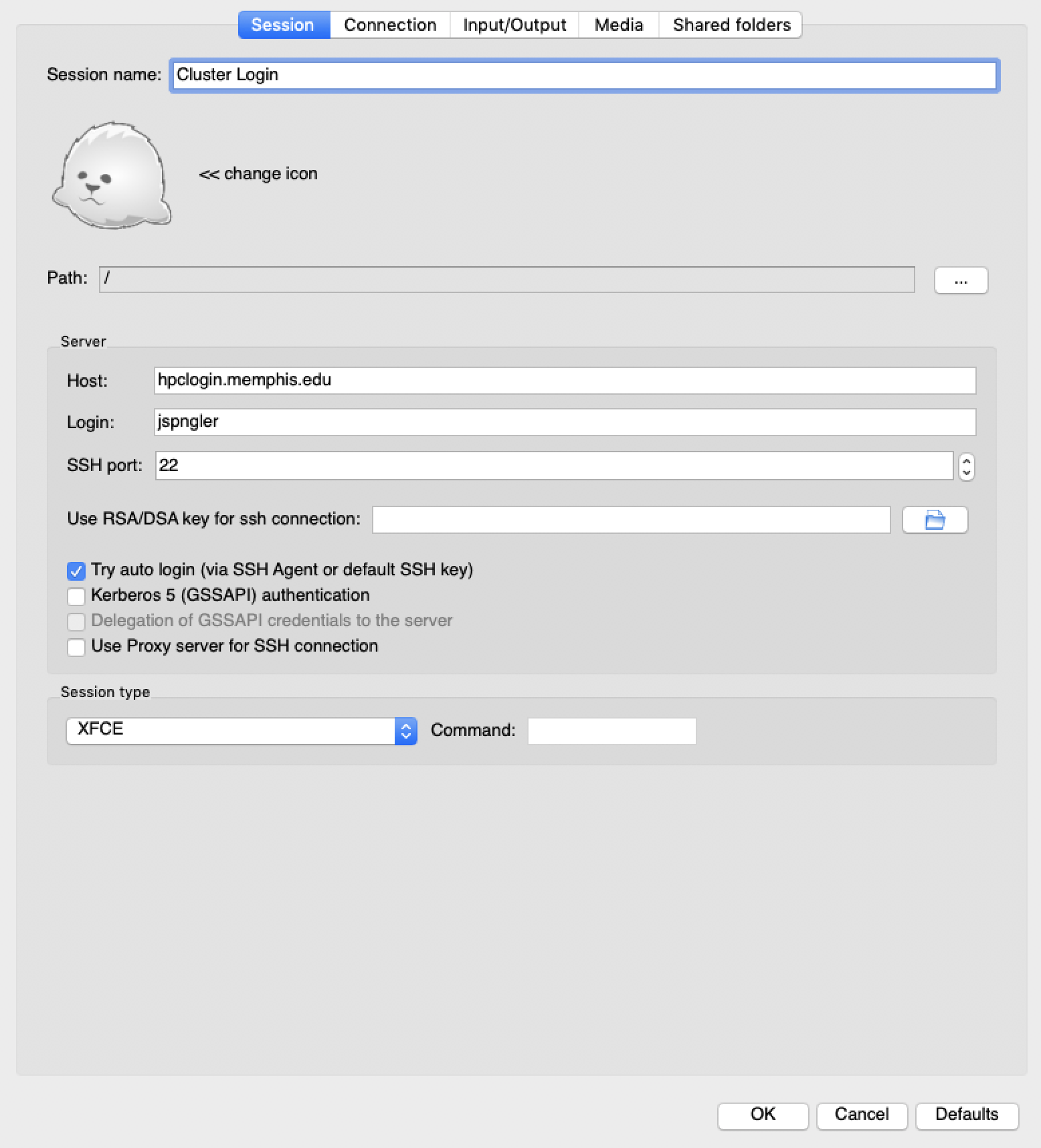

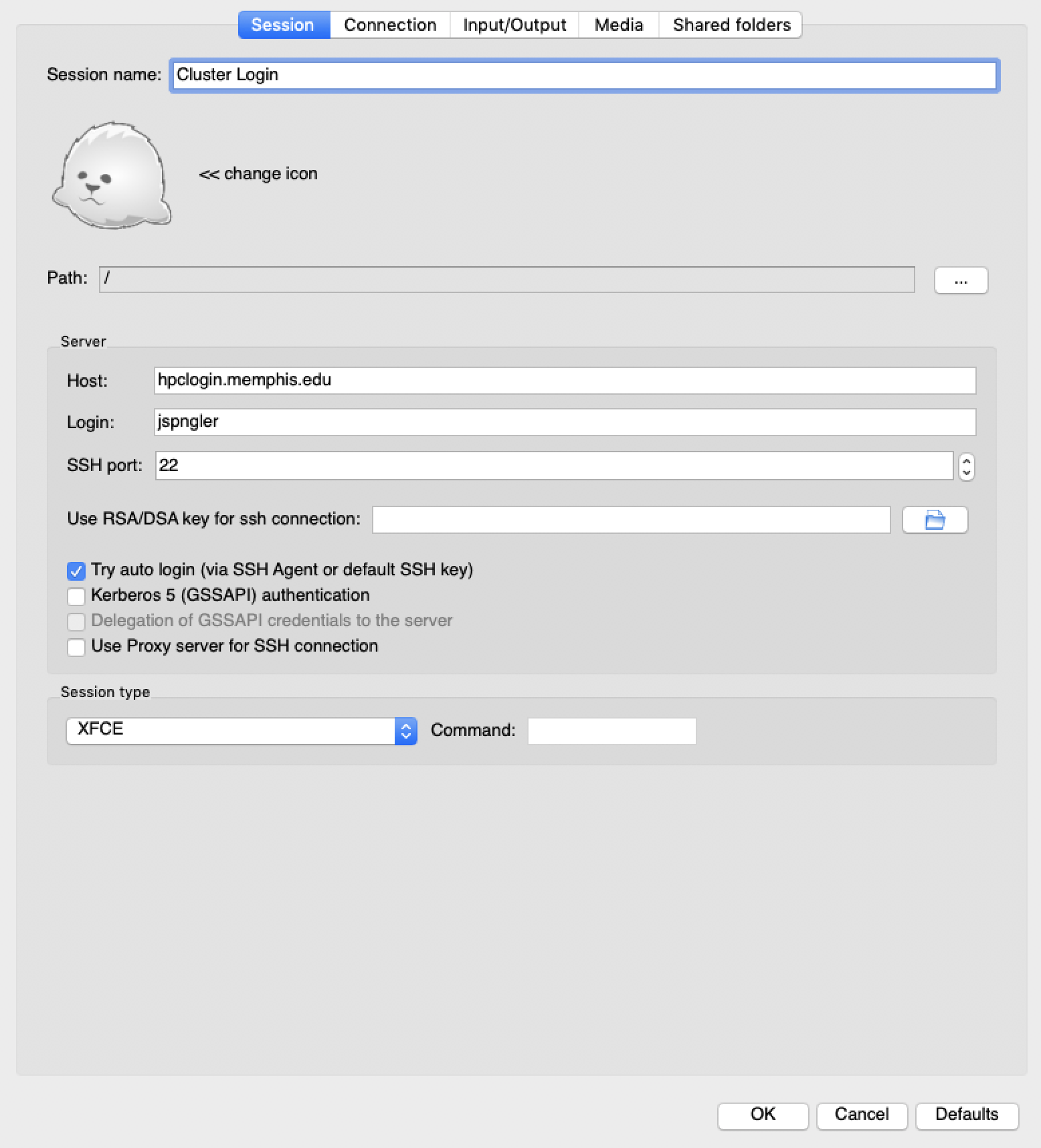

- Create a new session with:

- Session Name: Cluster Login

- Host: hpclogin.memphis.edu

- Login: your user id

- SSH port: 22

- Set "Session Type" to XFCE

- Click OK

- Type in the "Session:" area "Cluster Login"

- Press Enter

- It will ask for your U of M password

- Now you should see the XFCE desktop!

Session settings (with UUID jspngler):

Session area dialog:

Login desktop:

Dear HPC user,

Starting next week, we will be making 2 adjustments to the cluster's scheduler:

Memory per CPU

First, on May 13, we will decrease the default memory allocation from 2 gigabytes per CPU down to 1 gigabyte per CPU. For users already using the --mem-per-cpu option for sbatch/srun/salloc commands, this will not impact your jobs. For users relying on the default allocation, it is unlikely to impact your job, but if you receive the error:

Exceeded job memory limit

You can increase the limit by resubmitting with --mem-per-cpu=2G included. In a batch script, you will need the following line:

#SBATCH --mem-per-cpu=2G

If you are unsure how much memory your job used, or repeatedly get this error even

after increasing the memory, run the following sacct command to get the appropriate value, as per the following example:

[jspngler@log001 ~ ]$ srun -c 1 -p computeq --mem-per-cpu=1M --pty /bin/bash

srun: Exceeded job memory limit

srun: Exceeded job memory limit

srun: Job step 207977.0 aborted before step completely launched.

srun: Job step aborted: Waiting up to 32 seconds for job step to finish.

srun: error: c032: task 0: Killed

[jspngler@log001 ~ ]$ sacct -j 207977 -o jobid,maxvmsize

JobID MaxVMSize

------------ ----------

207977

207977.exte+ 187568K

207977.0 323032K

[jspngler@log001 ~ ]$ srun -c 1 -p computeq --mem-per-cpu=323032K --pty /bin/bash

[jspngler@c006 ~ ]$
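The MaxVMSize value in the example above is reported with a K suffix, while --mem-per-cpu takes megabytes by default. The helper below is a minimal sketch of that conversion, assuming the K suffix means units of 1024 bytes. The function name kib_to_mib is made up for illustration.

```shell
# Convert a sacct size such as "323032K" into whole megabytes, rounded up
# so the request is never smaller than the measured value.
kib_to_mib() {
    local kib="${1%K}"             # strip the trailing K suffix
    echo $(( ($kib + 1023) / 1024 ))
}

kib_to_mib 323032K   # prints 316
```

With that result, the resubmission in the example could also be written as --mem-per-cpu=316.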

Job Submission Priority

Second, from May 13 to May 18, we will be modifying the job queue. Currently, jobs are scheduled on a first-come, first-served basis, but we will attempt to adjust the scheduling priority to favor shorter jobs. Next week, if your job sits in the PENDING state too long, consider adjusting the timelimit to a lower value via the scontrol command:

scontrol update job [jobid] timelimit=[DAYS]-[HOURS]:[MINUTES]:[SECONDS]

If you need more time on a job, send an E-mail to hpcadmins@memphis.edu with the jobId to request an increased timelimit.

With the update, you will be able to submit short jobs much quicker (by lowering the

timelimit) and see the priority factors affecting your job (via sprio command).
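To make the time limit format concrete, here is a small sketch that builds a DAYS-HOURS:MINUTES:SECONDS string and prints the matching commands instead of running them. It uses the JobId= and TimeLimit= keyword spelling from the scontrol manual. The helper names and the job ID 123456 are placeholders.

```shell
# Build a Slurm time limit string in DAYS-HOURS:MINUTES:SECONDS form.
# Pass plain integers without leading zeros (e.g. 8, not 08).
format_timelimit() {
    printf '%d-%02d:%02d:%02d\n' "$1" "$2" "$3" "$4"
}

# Print (rather than run) the commands so they can be reviewed first.
show_update_commands() {
    local jobid="$1"
    local limit="$2"
    echo "scontrol update JobId=${jobid} TimeLimit=${limit}"
    echo "sprio -j ${jobid}"
}

# Placeholder job ID; lower the limit to 12 hours.
show_update_commands 123456 "$(format_timelimit 0 12 0 0)"
```

Copying the printed lines into a login shell applies the lower limit and then shows the priority factors for that job.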

Kristian Skjervold (skjervld)

Mon 4/15, 2:22 PM

Reminder: Old, old home (data older than three and a half years) is going away next

week. See my previous two messages contained in this message for additional information.

Let me know if you have something you want help with.

Thanks,

Kristian

>>>>>>>>>>>>> 3/21/2019 message >>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>

HPC Users,

I sent the message below back at the end of January. I wanted to remind you all that

after April 24th, the old data from 2012 – 2015 and earlier will be going away. If

there is a chance you have data there that you want to keep, please take a look and

copy it.

The mount point /oldoldhome on the machine hpcxfer is not very stable. So, if you

are having trouble, let me know and I can remount it.

Thanks,

Kristian

>>>>>>>>>>>>> previous message >>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>

>>>>>>>>>>>>> January 2019 message >>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>

We have old data available from the previous two HPC clusters.

The data in /oldhome is from the most recent Penguin cluster, 2015 – 2018, and we

plan on leaving that data there for the time being.

The data from the cluster before the last one, data from 2012 – 2015, which itself

contains some carry-over from HPC work earlier than 2012, is on hardware that is now

approaching seven years in age. That hardware is not under warranty. If the hardware

fails, the data could be lost.

We have made that data available to you (see below), and it will remain available

until Wednesday April 24th, 2019; that is the last day of classes for spring semester.

To review any data that you might have generated during this time period, you can

log on to a machine called hpcxfer.memphis.edu using SSH with your regular username/password.

Find your user directory in /oldoldhome. If you are the PI for a lab, you might investigate

the directories of members of your lab as well. If there is data that you would like

to keep, you can simply use SCP to copy it off to a location of your choice. If you

would like assistance, you can submit a helpdesk request and we can help you get that

data to a location of your choosing.
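The copy step described above could be sketched as follows. This assumes your directory under /oldoldhome is named after your username, which may not hold for every account. The function names, the user jdoe, and the destination path are hypothetical.

```shell
# Build the remote source path for a user's directory on the old storage.
# Assumption: the directory name matches the username.
old_home_source() {
    local user="$1"
    echo "${user}@hpcxfer.memphis.edu:/oldoldhome/${user}"
}

# Copy the whole directory to a local destination.
# Not called here; run it yourself from the machine receiving the data.
copy_old_home() {
    local user="$1"
    local dest="$2"
    scp -r "$(old_home_source "$user")" "$dest"
}

old_home_source jdoe   # prints jdoe@hpcxfer.memphis.edu:/oldoldhome/jdoe
```

For example, copy_old_home jdoe ./oldoldhome_backup would prompt for the campus password and copy the directory recursively.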

My belief is that there probably aren't too many things on /oldoldhome that are needed

anymore, but it is your data and you need the opportunity to take a look to be sure.

Take a look. Do what you feel comfortable doing. If you would like assistance of any

kind, don't hesitate to put in a helpdesk ticket.

Thanks,

Kristian

>>>>>>>>>>>>> previous message >>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>

The following message has two corrections*

HPC Usage

Since January 3rd the cluster has been updated to better utilize resources. Last year,

jobs would allocate whole nodes with up to 10 jobs per node, but this year jobs allocate

two resources: CPU cores and Memory.

To use these resources, you can use the following submission combinations in the #SBATCH lines in your scripts or as part of the submission line for the commands sbatch or srun ... -p PARTITION --pty /bin/bash or salloc ..., where ... is one of:

- -c M or --cpus-per-task=M: Allocate M CPU cores on 1 node in a job.

- single core (or thread), single node, shared memory (SMP), OpenMP, pThreads, etc...

jobs

- -n M or --ntasks=M: Allocate M CPU cores on up to M nodes.

- MPI jobs for M greater than 1

- single core jobs for M equal to 1

- -n M -N S, or --ntasks=M --nodes=S: Allocate M CPU cores on at least* S nodes.

- MPI jobs for S greater than 1

- single node, SMP, jobs for S equal to 1

- -N M --ntasks-per-node=S, or --nodes=M --ntasks-per-node=S: Allocate S*M cores on exactly M nodes.

- MPI jobs for M greater than 1

- single node, SMP, jobs for M equal to 1

- -n M --ntasks-per-node=S, or --ntasks=M --ntasks-per-node=S: Allocate M CPU cores on at least* M/S nodes (rounded up) with a maximum of S tasks per node.

- MPI jobs

- -c M -n S, or --cpus-per-task=M --ntasks=S: Allocate M CPU cores per task for S tasks on up to S nodes.

- MPI jobs for S greater than 1

- single node, SMP, jobs for S equal to 1
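As one example of the combinations listed above, a single-node shared-memory (OpenMP) job using -c / --cpus-per-task might be sketched like this. The core count, time limit, and program name are hypothetical; the partition and option names are the ones used in this message.

```shell
#!/bin/bash
#SBATCH --partition=computeq
#SBATCH --cpus-per-task=8      # hypothetical: 8 cores on one node
#SBATCH --mem-per-cpu=1024     # megabytes per core
#SBATCH --time=0-01:00:00      # hypothetical one-hour limit

# Slurm sets SLURM_CPUS_PER_TASK when --cpus-per-task is given; default to 1
# so the script also runs outside a job.
export OMP_NUM_THREADS="${SLURM_CPUS_PER_TASK:-1}"
echo "Running with ${OMP_NUM_THREADS} OpenMP threads"

# Replace with your own program (hypothetical name):
# ./my_openmp_program
```

For an MPI job, the --cpus-per-task line would typically be swapped for --ntasks=M, with the program launched through srun.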

Memory is requested with the following mutually exclusive options, where the default

is 4096 megabytes per CPU core on the computeq, gpuq, and bigmemq partition nodes:

- --mem=M: Use M megabytes per node.

- single node jobs

- multiple node jobs can use this, but if slurm allocates 1 core on one node and 40

cores on another you may run into problems

- --mem-per-cpu=M: Use M megabytes per CPU core. This is preferred for most jobs.

- single node jobs

- multiple node jobs
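Because --mem-per-cpu scales with the number of cores, it can help to work out the total a job will request. The sketch below does that arithmetic; the function name is made up for illustration, and the 40-core, 4096-megabyte figures are taken from the text above.

```shell
# Total memory (in megabytes) implied by a --mem-per-cpu request.
total_mem_mb() {
    local cores="$1"
    local mem_per_cpu_mb="$2"
    echo $(( $cores * $mem_per_cpu_mb ))
}

total_mem_mb 40 4096   # prints 163840
```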

Also, if you go over the memory requested for your job, you will see an error in the file specified in --error=file or in your terminal for interactive srun or salloc jobs. You can remedy this by requesting more memory. If you need more than ~180 GB per node, try using the bigmemq partition with more memory requested. To see how much memory was used (maxrss) and needed (maxvmsize) for your job, run the command:

sacct -j ###### --format jobid,jobname,partition,account,alloccpus,state,exitcode,maxrss,maxvmsize --units=M

for that job (where ###### is the jobid listed in squeue or sacct) to get memory in megabytes. -j ###### can also be replaced by -u username to list all jobs run by a specific user.
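The ~180 GB guideline above can be turned into a quick check before submitting. This is only a sketch: the function name is hypothetical, computeq is assumed as the fallback partition, and the threshold is the approximate figure quoted in this message, not an exact hardware limit.

```shell
# Suggest a partition from the per-node memory a job needs (in megabytes).
choose_partition() {
    local mem_mb="$1"
    local limit_mb=$(( 180 * 1024 ))   # ~180 GB per node, from the text above
    if [ "$mem_mb" -gt "$limit_mb" ]; then
        echo bigmemq
    else
        echo computeq
    fi
}

choose_partition 163840   # prints computeq
choose_partition 200000   # prints bigmemq
```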

Department (--account or -A in job submission SBATCH line or srun, sbatch, salloc

commands)

Another note is that you shouldn't need to specify an account per job, as the accounts

specified by the command:

sacctmgr show assoc tree

will be used. If you don't see your username in the output or don't feel like the

placement is up to date, please contact us at hpcadmins@memphis.edu.

HPC Help, Software, and Account Requests

We also have a new ticket system for cluster problems.

- Go to topdesk and select "Use Self-Service Portal" to log in.

- Select "Research and HPC Software".

- From there you can select one of two options:

- "HPC Account" to

- "Request an HPC Account"

- "Request an HPC Consultation Session"

- "HPC Software" to

- "Request HPC Software Installation"

- "Request HPC Software Assistance".

If you have any questions, comments, or need to request more time on a job, please

contact us at hpcadmins@memphis.edu.

If you run into any problems using your account or software on the cluster, please

submit a request in topdesk.

Hope you have a great experience with the HPC in 2019!